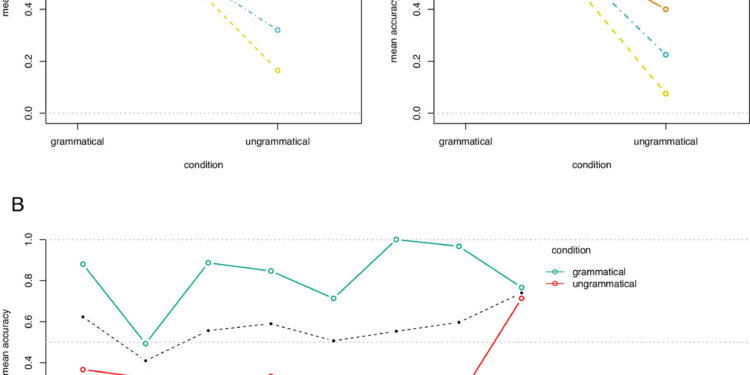

(A) Average accuracy per condition and model: (A1) individual responses; (A2) preferred answers per sentence. (B) Average accuracy per phenomenon and condition. The dotted black line indicates the average accuracy for each phenomenon across the two conditions. Credit: Proceedings of the National Academy of Sciences (2023). DOI: 10.1073/pnas.2309583120

A study by researchers from the UAB and the URV, published in PNAS, shows that humans recognize grammatical errors in a sentence, while AI does not. The researchers compared the skills of humans with those of the three best large language models currently available.

Language is one of the main characteristics that differentiates human beings from other species. Its origin, how it is learned, and why people were able to develop such a complex communication system have raised many questions for linguists and researchers from a wide variety of research fields.

In recent years, considerable progress has been made in teaching language to computers, leading to the emergence of so-called large language models: technologies trained on enormous amounts of data that underpin certain artificial intelligence (AI) applications, such as search engines, automatic translators or audio-to-text converters.

But what are the language skills of these models? Can they be compared to those of a human being? A research team led by the URV, with the participation of the Humboldt University of Berlin, the Autonomous University of Barcelona (UAB) and the Catalan Institute for Research and Advanced Studies (ICREA), tested these systems to check whether their language skills are comparable to those of people. To do this, they compared the skills of humans with those of the three best large language models currently available: two based on GPT-3 and one (ChatGPT) based on GPT-3.5.

The task was simple: participants had to determine on the spot whether a wide variety of sentences were grammatically well-formed in their native language. Both the humans who took part in the experiment and the language models were asked a very simple question: “Is this sentence grammatically correct?”

The results showed that humans answered correctly, while the large language models gave many wrong answers. In fact, they adopted a default strategy of answering “yes” most of the time, whether the answer was correct or not.
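To make the effect of a default “yes” strategy concrete, the following minimal sketch scores a set of yes/no judgments. It is an illustration only, not the authors' analysis code: the `Judgment` class, the function names and the toy data are all hypothetical, and it assumes the two conditions are simply grammatical and ungrammatical sentences.

```python
from dataclasses import dataclass


@dataclass
class Judgment:
    """One reply to "Is this sentence grammatically correct?" (hypothetical structure)."""
    is_grammatical: bool  # ground truth for the sentence
    answered_yes: bool    # the rater's reply


def accuracy(judgments):
    """Share of replies that match the ground truth."""
    if not judgments:
        raise ValueError("no judgments to score")
    correct = sum(j.is_grammatical == j.answered_yes for j in judgments)
    return correct / len(judgments)


def yes_rate(judgments):
    """Share of replies that were "yes", regardless of correctness."""
    if not judgments:
        raise ValueError("no judgments to score")
    return sum(j.answered_yes for j in judgments) / len(judgments)


def accuracy_by_condition(judgments):
    """Accuracy computed separately for grammatical and ungrammatical sentences."""
    grammatical = [j for j in judgments if j.is_grammatical]
    ungrammatical = [j for j in judgments if not j.is_grammatical]
    return {
        "grammatical": accuracy(grammatical),
        "ungrammatical": accuracy(ungrammatical),
    }


# Toy data invented for illustration: a rater that always answers "yes"
# on four grammatical and four ungrammatical sentences.
always_yes = [Judgment(True, True)] * 4 + [Judgment(False, True)] * 4

print(yes_rate(always_yes))               # 1.0
print(accuracy(always_yes))               # 0.5
print(accuracy_by_condition(always_yes))  # {'grammatical': 1.0, 'ungrammatical': 0.0}
```

In this toy case, a rater that always says “yes” looks perfect on well-formed sentences and fails on every ill-formed one, which is why accuracy needs to be read per condition rather than only as an overall figure.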

“The result is surprising, since these systems are trained on the basis of what is grammatically correct or not in a language,” explains Vittoria Dentella, researcher at the Department of English and German Studies, who led the study. Human raters explicitly train these large language models on the grammaticality of constructions they may encounter.

Through a learning process reinforced by human feedback, these models are shown examples of ungrammatical sentences followed by the correct version. This type of instruction is a fundamental part of their “training”. This is not the case for humans, however. “Although people who raise a baby occasionally correct the way they speak, they don’t do it constantly in any language community in the world,” she says.

The study therefore reveals a double mismatch between humans and AI. People do not have access to “negative evidence” (information about what is not grammatically correct in the language they hear), whereas large language models do, thanks to human feedback. Yet despite this, the models cannot recognize trivial grammatical errors, whereas humans can do so instantly and effortlessly.

“Developing useful and safe artificial intelligence tools can be very helpful, but we need to be aware of their shortcomings. Since most AI applications depend on understanding commands given in natural language, determining their limited understanding of grammar, as we did in this study, is of vital importance,” notes Evelina Leivada, ICREA research professor in the Department of Catalan Studies at UAB.

“These results suggest that we need to think critically about whether AIs actually possess language skills similar to those of humans,” concludes Dentella, who considers that adopting these language models as theories of human language is not justified at their current stage of development.

More information:

Vittoria Dentella et al, Systematic testing of three Language Models reveals low language accuracy, absence of response stability, and a yes-response bias, Proceedings of the National Academy of Sciences (2023). DOI: 10.1073/pnas.2309583120

Provided by the Autonomous University of Barcelona

Citation: Research shows artificial intelligence fails at grammar (January 11, 2024)
