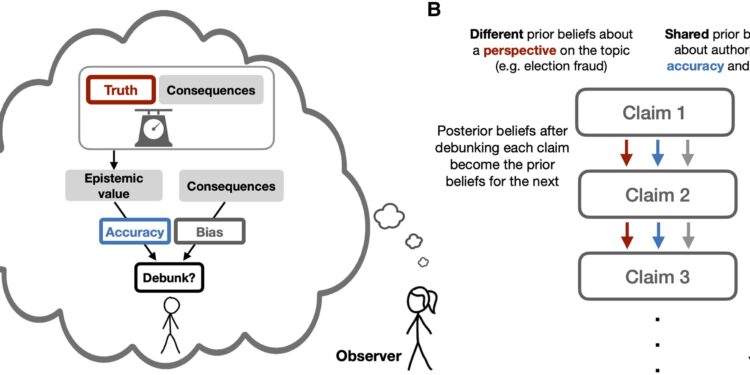

A) Conceptual representation of the inverse planning model and B) schematic of the model simulations. In each pair of simulations, two subgroups initially have different beliefs about a topic, but share common beliefs about the authority’s motivations and biases. After observing each of the authority’s debunking decisions (i.e., debunking a viewpoint’s assertions), observers use their mental model of the authority’s decision-making to simultaneously update their beliefs about the perspective (i.e., its truth) and about the authority’s motives and biases. The observers’ posterior beliefs after each debunking decision then serve as prior beliefs for judging the next decision. Credit: PNAS Nexus (2024). DOI: 10.1093/pnasnexus/pgae393

When the outcome of an election is contested, people skeptical of the outcome may be swayed by authority figures who come down on one side or the other. These figures can be independent observers, political figures or news organizations. However, these “debunking” efforts do not always have the desired effect, and in some cases they can cause people to cling more tightly to their original position.

Neuroscientists and political scientists from MIT and the University of California, Berkeley, created a computer model that analyzes the factors that help determine whether debunking efforts will persuade people to change their beliefs about the legitimacy of an election. Their results suggest that although debunking fails most of the time, it can succeed under the right conditions.

For example, the model showed that successful debunking is more likely if people are less sure of their initial beliefs and if they believe the authority is impartial or strongly motivated by a desire for accuracy. It also helps when an authority comes out in favor of an outcome that goes against a perceived bias: for example, Fox News declaring that Joseph R. Biden had won in Arizona in the 2020 US presidential election.

“When people see an act of debunking, they treat it as a human action and understand it the same way they understand human actions, that is, as something someone did for their own reasons,” says Rebecca Saxe, the John W. Jarve Professor of Brain and Cognitive Sciences, a member of MIT’s McGovern Institute for Brain Research and senior author of the study. “We used a very simple general model of how people understand the actions of others, and found that that’s all you need to describe this complex phenomenon.”

These results could have implications as the United States prepares for the November 5 presidential election, because they help reveal the conditions that would be most likely to lead people to accept the election outcome.

Setayesh Radkani, an MIT graduate student, is the lead author of the paper, which appears in a special election-themed issue of the journal PNAS Nexus. Marika Landau-Wells, Ph.D., a former MIT postdoctoral fellow and now an assistant professor of political science at the University of California, Berkeley, is also an author of the study.

Modeling motivation

In their work on election debunking, the MIT team took a novel approach, building on Saxe’s extensive work studying “theory of mind,” the way people think about the thoughts and motivations of others.

As part of a doctoral dissertation, Radkani developed a computational model of the cognitive processes that occur when people see others being punished by an authority. Not everyone interprets punitive actions the same way; the interpretation depends on their prior beliefs about the action and the authority. Some may view the authority as acting legitimately to punish wrongdoing, while others may view it as going too far in inflicting unjust punishment.

Last year, after attending an MIT workshop on the topic of societal polarization, Saxe and Radkani had the idea to apply the model to how people respond to an authority that attempts to influence their political beliefs. They enlisted Landau-Wells, who earned a Ph.D. in political science before working as a postdoctoral fellow in Saxe’s lab, to join their efforts, and Landau-Wells suggested applying the model to the debunking of beliefs about the legitimacy of an election outcome.

The computer model Radkani created is based on Bayesian inference, which allows the model to continually update its predictions about people’s beliefs as they receive new information. This approach views debunking as an action that a person takes for their own reasons. People observing the authority’s statement then make their own interpretation of why the person said what they did. Based on this interpretation, people may or may not change their own beliefs regarding the outcome of the election.

Additionally, the model does not assume that any beliefs are necessarily incorrect or that any group of people is acting irrationally.

“The only assumption we’ve made is that there are two groups in society that differ in their views on a subject: one of them thinks the election was stolen and the other group doesn’t,” says Radkani. “Other than that, these groups are similar. They share their beliefs about the authority: what the different motives of the authority are and the extent to which the authority is motivated by each of those motives.”

The researchers modeled more than 200 different scenarios in which an authority attempts to debunk a group’s belief regarding the validity of an election result.

Each time they ran the model, the researchers varied the certainty levels of each group’s original beliefs, and they also varied the groups’ perceptions of the authority’s motivations. In some cases, groups believed that the authority was motivated by promoting accuracy, and in others, they did not. The researchers also changed the groups’ perceptions of whether the authority was biased toward a particular point of view, and how strongly the groups held those perceptions.
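The kind of reasoning described above can be illustrated with a toy Bayesian update. The sketch below is not the researchers’ model; it is a minimal illustration under assumptions chosen here for simplicity: the election is either “legitimate” or “stolen,” the authority is either purely accuracy-motivated or biased toward declaring the election legitimate, and every statement the authority makes declares the election legitimate. All function names, the two-motive simplification and the `noise` value are hypothetical.

```python
from itertools import product

TRUTHS = ("legitimate", "stolen")
MOTIVES = ("accuracy", "biased")  # assumed two-motive simplification


def make_prior(p_legit, p_accuracy):
    """Joint prior over (truth, motive), assuming the two start out independent."""
    p_truth = {"legitimate": p_legit, "stolen": 1.0 - p_legit}
    p_motive = {"accuracy": p_accuracy, "biased": 1.0 - p_accuracy}
    return {(t, m): p_truth[t] * p_motive[m] for t, m in product(TRUTHS, MOTIVES)}


def likelihood_says_legit(truth, motive, noise=0.1):
    """P(authority declares the election legitimate | truth, motive)."""
    if motive == "accuracy":
        # An accuracy-motivated authority mostly reports the truth.
        return 1.0 - noise if truth == "legitimate" else noise
    # A biased authority mostly says "legitimate" whatever the truth is.
    return 1.0 - noise


def update(belief, noise=0.1):
    """One Bayesian update after observing a 'the election was legitimate' statement."""
    unnormalized = {
        state: prob * likelihood_says_legit(*state, noise=noise)
        for state, prob in belief.items()
    }
    total = sum(unnormalized.values())
    return {state: value / total for state, value in unnormalized.items()}


def marginal(belief, index, value):
    """Marginal probability that component `index` of the state equals `value`."""
    return sum(prob for state, prob in belief.items() if state[index] == value)


def simulate(p_legit, p_accuracy, n_statements=5, noise=0.1):
    """Apply n_statements updates; each posterior is the prior for the next one."""
    belief = make_prior(p_legit, p_accuracy)
    for _ in range(n_statements):
        belief = update(belief, noise)
    return marginal(belief, 0, "legitimate"), marginal(belief, 1, "accuracy")


# Two groups that differ only in their initial belief about the election,
# under two shared views of how accuracy-motivated the authority is.
for p_accuracy in (0.5, 0.95):
    for label, p_legit in (("skeptical", 0.2), ("accepting", 0.8)):
        truth, trust = simulate(p_legit, p_accuracy)
        print(f"trust prior={p_accuracy:.2f} {label:9s} "
              f"P(legitimate)={truth:.3f} P(accuracy-motivated)={trust:.3f}")
```

In this toy version, when both groups give the authority only even odds of being accuracy-motivated, the skeptical group shifts a little and then stalls, while its trust in the authority drops sharply, so the two groups stay far apart. When both groups start out viewing the authority as very likely accuracy-motivated, the skeptical group moves most of the way toward the other group’s position.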

Building consensus

In each scenario, the researchers used the model to predict how each group would react to a series of five statements made by an authority trying to convince them that the election had been legitimate. The researchers found that in most of the scenarios they examined, beliefs remained polarized and, in some cases, became even more polarized. This polarization could also extend to new topics unrelated to the initial context of the election, the researchers found.

However, in some circumstances, debunking was successful and beliefs converged on an accepted outcome. This was more likely to happen when people were initially more uncertain about their beliefs.

“When people are very, very certain, they become difficult to move. So, in essence, a lot of this authority debunking doesn’t matter,” says Landau-Wells. “However, many people fall into this band of uncertainty. They have doubts, but they don’t have firm convictions. One of the lessons of this paper is that we are in a space where the model says that you can affect people’s beliefs and move them towards true things.”

Another factor that can lead to belief convergence is people believing that the authority is impartial and strongly motivated by accuracy. This is even more compelling when an authority makes a claim that goes against its perceived bias, for example, Republican governors stating that the election in their state was fair even though the Democratic candidate won.

As the 2024 presidential election approaches, efforts have been made on the ground to train nonpartisan election observers who can attest to the legitimacy of an election. These types of organizations could be well-positioned to help influence people who may have doubts about the legitimacy of the election, the researchers say.

“They’re trying to train people to be independent, impartial and committed to the truth of the outcome more than anything else. Those are the types of entities you want. We want them to succeed in being seen as independent. We want them to be seen as truthful, because in this space of uncertainty, these are the voices that can move people toward an accurate outcome,” says Landau-Wells.

More information:

Setayesh Radkani et al, How rational inference about authority debunking can curtail, sustain, or spread belief polarization, PNAS Nexus (2024). DOI: 10.1093/pnasnexus/pgae393

Provided by the Massachusetts Institute of Technology

This story is republished courtesy of MIT News (web.mit.edu/newsoffice/), a popular site that covers news about MIT research, innovation and education.

Citation: Model reveals why debunking election misinformation often doesn’t work (October 15, 2024)
