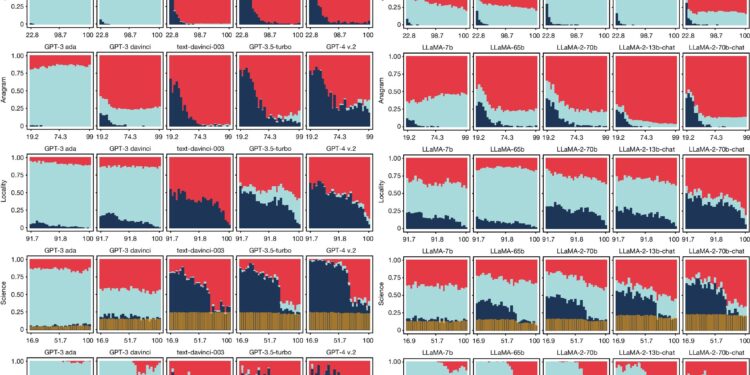

Performance of a selection of GPT and LLaMA models with increasing difficulty. Credit: Nature (2024). DOI: 10.1038/s41586-024-07930-y

A team of AI researchers at the Universitat Politècnica de València, in Spain, has found that as popular LLMs (large language models) grow larger and more sophisticated, they become less likely to admit to a user that they do not know an answer.

In their study, published in the journal Nature, the group tested the latest version of three of the most popular AI chatbots for their responses, their accuracy and how well users can spot wrong answers.

As LLMs have become commonplace, users have grown accustomed to using them to write articles, poems or songs, solve math problems and carry out other tasks, and the question of accuracy has become more important. In this new study, the researchers wondered whether the most popular LLMs become more accurate with each new update and what they do when they are wrong.

To test the accuracy of three of the most popular LLMs, BLOOM, LLaMA and GPT, the group asked them thousands of questions and compared the answers they received with earlier versions’ answers to the same questions.

They also varied the themes, including mathematics, science, anagrams and geography, and tested the LLMs' ability to generate text or perform actions such as sorting a list. For all questions, they first assigned a degree of difficulty.

They found that with each new iteration of a chatbot, accuracy generally improved. As expected, accuracy decreased as the questions became more difficult. But as the LLMs became larger and more sophisticated, they also tended to be less transparent about their own ability to answer a question correctly.

In earlier versions, most LLMs responded by telling users that they couldn’t find the answers or needed more information. In newer versions, LLMs were more likely to guess, leading to more answers overall, both correct and incorrect. They also found that all LLMs sometimes produced incorrect answers, even to easy questions, suggesting that they are still unreliable.
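The comparison described above can be pictured as a simple tally of answer outcomes per model version and difficulty level. The following is only a minimal sketch under stated assumptions: the records, the outcome labels ("correct", "incorrect", "declined") and the function name `outcome_rates` are hypothetical and chosen for illustration; they are not the study's data or its actual grading code.

```python
from collections import Counter, defaultdict

# Hypothetical graded records: (model_version, difficulty, outcome).
# Both the labels and the values are illustrative, not taken from the study.
RECORDS = [
    ("older", "easy", "correct"),
    ("older", "easy", "declined"),
    ("older", "hard", "declined"),
    ("older", "hard", "incorrect"),
    ("newer", "easy", "correct"),
    ("newer", "easy", "incorrect"),
    ("newer", "hard", "correct"),
    ("newer", "hard", "incorrect"),
]

OUTCOMES = ("correct", "incorrect", "declined")


def outcome_rates(records):
    """Return {(version, difficulty): {outcome: share}} for each group."""
    counts = defaultdict(Counter)
    for version, difficulty, outcome in records:
        counts[(version, difficulty)][outcome] += 1

    rates = {}
    for group, counter in counts.items():
        total = sum(counter.values())
        # Counter returns 0 for outcomes that never occurred in a group.
        rates[group] = {o: counter[o] / total for o in OUTCOMES}
    return rates


if __name__ == "__main__":
    for (version, difficulty), shares in sorted(outcome_rates(RECORDS).items()):
        print(version, difficulty, shares)
```

In this toy data, the "newer" version never declines to answer, so its share of both correct and incorrect answers rises relative to the "older" one, which mirrors the pattern the article describes.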

The research team then asked volunteers to rate the answers from the first part of the study as correct or incorrect and found that most had difficulty spotting the incorrect answers.

More information:

Lexin Zhou et al, Larger, more instructable language models become less reliable, Nature (2024). DOI: 10.1038/s41586-024-07930-y

© 2024 Science X Network

Citation: As LLMs Grow, They Are More Likely to Give Wrong Answers Than Admit Their Ignorance (September 27, 2024) retrieved September 27, 2024 from
