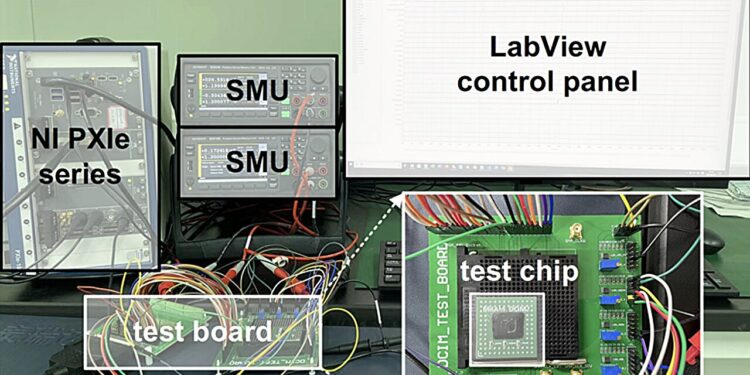

The experimental and measurement platform the team used to evaluate the nvDCIM chips. Credit: Nature Electronics (2025). DOI: 10.1038/s41928-025-01479-y

To make accurate predictions and reliably accomplish desired tasks, most artificial intelligence (AI) systems must quickly analyze large amounts of data. This currently involves the transfer of data between processing and memory units, which are separate in existing electronic devices.

In recent years, many engineers have attempted to develop new hardware, known as computing-in-memory (CIM) systems, that can run AI algorithms more efficiently. CIM systems are electronic components that both perform calculations and store information, typically serving as both processors and non-volatile memories. Non-volatile means that they retain data even when they are powered off.

Most previously introduced CIM designs rely on analog computing approaches, which allow devices to perform calculations by harnessing electrical current. Despite their good energy efficiency, analog computing techniques are known to be significantly less precise than digital computing methods and often fail to reliably handle large AI models or large amounts of data.

Researchers from Southern University of Science and Technology, Xi’an Jiaotong University and other institutes recently developed a promising new CIM chip that could help run AI models faster and with greater energy efficiency.

Their proposed system, described in a paper published in Nature Electronics, is based on spin-transfer torque magnetic random-access memory (STT-MRAM), a spintronic device capable of storing binary units of information (i.e., 0s and 1s) in the magnetic orientation of one of its underlying layers.

Using spintronics to run AI more efficiently

STT-MRAM devices, like the one used by this research team, are built around a tiny structure known as a magnetic tunnel junction (MTJ). This structure has three layers: a magnetic layer with a fixed orientation, a magnetic layer whose orientation can change, and a thin insulating layer that separates the other two.

When the two magnetic layers have parallel magnetic directions, electrons can easily pass through the device and its resistance is low. When the directions are opposite, the resistance increases and the flow of electrons becomes more difficult. STT-MRAM devices exploit these two different states to store binary data.
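The two-state behavior described above can be illustrated with a toy Python model. This is only a sketch under stated assumptions: the resistance values, the class name `MagneticTunnelJunction`, and the convention that the parallel state stores 0 are all hypothetical choices made for illustration, and none of them comes from the paper.

```python
from dataclasses import dataclass

# Hypothetical resistance values, chosen only for illustration (not from the paper).
R_PARALLEL_OHMS = 3_000.0      # low-resistance state: magnetic layers aligned
R_ANTIPARALLEL_OHMS = 6_000.0  # high-resistance state: magnetic layers opposed


@dataclass
class MagneticTunnelJunction:
    """Toy model of one MTJ bit cell with a switchable free layer."""

    parallel: bool = True

    def write(self, bit: int) -> None:
        # Assumed convention: parallel stores 0, antiparallel stores 1.
        self.parallel = (bit == 0)

    def resistance(self) -> float:
        return R_PARALLEL_OHMS if self.parallel else R_ANTIPARALLEL_OHMS

    def read(self) -> int:
        # Compare the cell against a reference midway between the two states.
        reference = (R_PARALLEL_OHMS + R_ANTIPARALLEL_OHMS) / 2
        return 1 if self.resistance() > reference else 0


cell = MagneticTunnelJunction()
cell.write(1)
stored_bit = cell.read()
```

In this sketch, reading a bit is just a resistance comparison, which is why the stored value survives without power: the information lives in the magnetic orientation rather than in a held charge.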

“Non-volatile CIM macros (i.e., pre-designed functional modules inside a chip that can both process and store data) can reduce data transfer between processing and memory units, thereby providing fast and energy-efficient artificial intelligence calculations,” Humiao Li, Zheng Chai and colleagues wrote in their paper.

“However, non-volatile CIM architecture typically relies on analog computation, which is limited in terms of accuracy, scalability, and robustness. We report a 64 KB non-volatile digital compute-in-memory macro based on 40 nm STT-MRAM technology.”

A step towards more scalable AI hardware

The STT-MRAM-based module introduced by the researchers can perform calculations and store bits reliably, all in a single device. In initial tests, it ran two distinct types of neural networks with high speed and accuracy.

“Our macro includes in situ multiplication and digitization at the bit cell level, precisely reconfigurable digital addition and accumulation at the macro level, and a flip rate-aware training scheme at the algorithm level,” the authors wrote. “The macro supports lossless matrix-vector multiplications with flexible input and weight precisions (4, 8, 12, and 16 bits), and can achieve software-equivalent inference precision for a residual network with 8-bit precision and physics-informed neural networks with 16-bit precision.

“Our macro has computation latencies of 7.4 to 29.6 ns and power efficiencies of 7.02 to 112.3 tera-operations per second per watt for fully parallel matrix-vector multiplications in precision configurations ranging from 4 to 16 bits.”
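To illustrate why a digital approach can make matrix-vector multiplication lossless, here is a minimal Python sketch that builds the product only from 1-bit multiplications (logical ANDs), shifts, and integer additions. It is not the authors' circuit: the function names, the restriction to unsigned integers, and the example values are assumptions made for illustration.

```python
def bit_planes(value: int, bits: int) -> list[int]:
    """Split an unsigned integer into its bits, least significant first."""
    return [(value >> i) & 1 for i in range(bits)]


def bit_sliced_mvm(weights: list[list[int]], inputs: list[int], bits: int) -> list[int]:
    """Matrix-vector product using only 1-bit ANDs, shifts and integer adds."""
    outputs = []
    for row in weights:
        acc = 0
        for w, x in zip(row, inputs):
            for i, w_bit in enumerate(bit_planes(w, bits)):
                for j, x_bit in enumerate(bit_planes(x, bits)):
                    # A 1-bit multiply is a logical AND; the shift restores its weight.
                    acc += (w_bit & x_bit) << (i + j)
        outputs.append(acc)
    return outputs


def reference_mvm(weights: list[list[int]], inputs: list[int]) -> list[int]:
    """Ordinary integer matrix-vector product, used as the ground truth."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


# Hypothetical 4-bit example values (each entry is between 0 and 15).
weights = [[3, 15, 7], [0, 9, 12]]
inputs = [5, 1, 14]
result = bit_sliced_mvm(weights, inputs, bits=4)
```

Because every step is exact integer arithmetic, `result` matches `reference_mvm(weights, inputs)` for any operands that fit in the chosen bit width. Raising `bits` from 4 to 16 in this sketch increases the number of 1-bit products per multiplication from 16 to 256, which gives a rough intuition for why latency and efficiency vary with the precision setting.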

In the future, the team’s newly developed CIM module could contribute to the energy-efficient deployment of AI directly on wearable devices, without the need for large data centers. In the coming years, this could also inspire the development of similar CIM systems based on STT-MRAMs or other spintronic devices.

Written for you by our author Ingrid Fadelli, edited by Lisa Lock, and fact-checked and edited by Robert Egan, this article is the result of painstaking human work.

More information:

Humiao Li et al, A lossless and fully parallel spintronic compute-in-memory macro for artificial intelligence chips, Nature Electronics (2025). DOI: 10.1038/s41928-025-01479-y

© 2025 Science X Network

Citation: AI efficiency advances thanks to a spintronic memory chip that combines storage and processing (October 29, 2025) retrieved October 30, 2025 from
